Vendor Proposal Evaluation Template: A Practical Guide

Three proposals are due this afternoon. One vendor sends a polished PDF with pricing buried in an appendix. Another sends a Word document full of tracked changes. The third sends a slide deck that looks sharp but leaves basic delivery terms vague. By the time your team has copied costs, service levels, milestones, and exceptions into one spreadsheet, half the room is already arguing about which proposal is “stronger.”

That’s the actual problem with vendor selection. It’s rarely the final scoring sheet. It’s the messy work required before scoring can even start.

That pain is widespread. According to Inventive’s summary of a 2025 Gartner report, 68% of procurement teams spend over 40% of their evaluation time extracting data from unstructured documents, and 52% report accuracy below 85% because they rely on manual copy-paste. If your current process feels slow and fragile, that’s why.

A usable vendor proposal evaluation template solves only half of this. The other half is getting every vendor’s data into a clean, comparable structure before the committee starts scoring. That’s where automation matters. Teams evaluating AI tooling for this kind of prep work can also review SupportGPT's AI comparison insights to think more clearly about model trade-offs behind parsing and analysis workflows.

Beyond Apples-to-Oranges: A Smarter Way to Evaluate Vendors

Most evaluation guides assume vendors respond in neat rows and columns. They don’t. Real proposals arrive as scans, brochures, email attachments, revised schedules, pricing tables pasted as images, and legal terms hidden deep in attachments.

A strong process fixes this in two stages. First, normalize the information. Second, score it.

Start with structure, not opinion

When teams skip normalization, they usually do one of two things. They compare surface impressions, or they let the most readable proposal win. Neither is reliable.

The better approach is simple:

- Gate obvious disqualifiers first. Missing certifications, unacceptable commercial terms, or incomplete responses shouldn't make it to detailed scoring.

- Extract comparable fields. Pricing, implementation timeline, security posture, support model, references, assumptions, and exclusions should sit in the same columns for every vendor.

- Apply a shared scoring rubric. Reviewers score against the same definitions instead of reacting to writing style.

The clean spreadsheet is not the evaluation. It is the condition that makes fair evaluation possible.

What a smarter workflow changes

A practical vendor proposal evaluation template gives procurement a defensible record. It shows what was measured, how it was weighted, and why one vendor ranked above another. That matters when stakeholders challenge the outcome or a losing vendor asks for a debrief.

It also changes the tone of review meetings. Instead of debating what a vendor “probably meant,” teams can discuss trade-offs that matter. One vendor may have stronger capabilities. Another may have tighter contract controls. A third may be cheaper but weak on service support. Those are real procurement judgments.

When the process works, procurement stops acting like a data-entry function and starts acting like a decision function.

Get Your Free Vendor Proposal Evaluation Template

The template should be boring in the best way. It should be easy to open, easy to explain, and hard to misuse. That usually means an Excel or Google Sheets file with a clear layout and locked formulas where needed.

A solid working version usually includes three tabs:

Criteria tab

This is the control panel. You list the evaluation categories, define what each criterion means, assign weights, and note any mandatory requirements. If your team changes the rubric halfway through, this tab shows exactly what changed.
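If the Criteria tab is kept as structured data, a few consistency checks can run automatically before anyone scores. The Python sketch below is one way to do that, assuming weights are stored as fractions that should total 100%. The `Criterion` fields and `validate_criteria` are hypothetical names, not a feature of Excel or Google Sheets. The weights reuse the example split quoted later in this guide, and the one-line definitions are placeholders.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    name: str
    definition: str          # what reviewers are scoring against
    weight: float            # share of the total, e.g. 0.25 for 25%
    mandatory: bool = False  # pass/fail requirement; recorded here, screened separately

def validate_criteria(criteria: list[Criterion]) -> list[str]:
    """Return a list of problems; an empty list means the rubric is internally consistent."""
    problems = []
    names = [c.name for c in criteria]
    if len(names) != len(set(names)):
        problems.append("duplicate criterion names")
    for c in criteria:
        if not c.definition.strip():
            problems.append(f"{c.name}: missing definition")
        if c.weight < 0:
            problems.append(f"{c.name}: negative weight")
    # Floating-point sums are rarely exactly 1.0, so compare with a tolerance.
    total = sum(c.weight for c in criteria)
    if not math.isclose(total, 1.0, abs_tol=1e-9):
        problems.append(f"weights sum to {total:.2f}, expected 1.00")
    return problems

rubric = [
    Criterion("Technical Expertise", "Relevant experience and technical approach", 0.25),
    Criterion("Capabilities", "Ability to deliver the full scope", 0.40),
    Criterion("Data Security", "Controls, certifications, incident handling", 0.10),
    Criterion("HR Policies", "Staffing, vetting, and retention practices", 0.10),
    Criterion("Diversity & Sustainability", "Supplier diversity and sustainability commitments", 0.15),
]
print(validate_criteria(rubric))  # prints []
```

Running the check whenever the rubric changes also gives you a simple record of when the weights stopped adding up.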

Vendor scoring tabs

Each vendor gets a dedicated sheet. Reviewers enter raw scores, add comments, and capture concerns tied to specific criteria. This is where nuance belongs. If a vendor’s implementation plan looks workable but understaffed, that note should live next to the score, not in someone’s inbox.

Summary dashboard

This is the executive view. It rolls up weighted scores, highlights gaps, and makes side-by-side comparison possible without stripping away the detail underneath. Decision-makers can see the ranking quickly, then drill into why that ranking happened.

Practical rule: If a stakeholder can’t trace a summary score back to the line-item justification, the template isn’t finished.

Use the template as a working document, not a static form. Add comment columns. Add a compliance flag. Add a “clarification needed” field. The best template is the one your team will use under deadline pressure.

How to Customize Your Evaluation Criteria

A generic template is a starting point, not a decision framework. If you evaluate a facilities contractor the same way you evaluate a software implementation partner, your scorecard will look tidy and still produce a bad decision.

The criteria need to reflect the purchase, the risk profile, and the internal teams who will live with the outcome.

According to Pointerpro’s vendor assessment guidance, effective vendor assessment templates address 30+ substantive questions across three primary categories: vendor capabilities, reliability, and partnership suitability. Those questions cover everything from quality control to invoice accuracy. That’s a useful benchmark because it forces teams to look beyond price and polished presentations.

Build criteria around the decision you actually need to make

Three broad buckets work well in practice:

- Capability fit. Can the vendor do the work as scoped? This includes technical approach, delivery model, staffing, tools, quality controls, and relevant experience.

- Operational reliability. Will they execute consistently? This covers timeline realism, support responsiveness, reporting discipline, issue handling, invoicing accuracy, and process maturity.

- Partnership fit. Will they work well with your organization after contract signature? This includes communication style, escalation process, change-order posture, governance, and willingness to improve over time.

A lot of weak evaluations overweight the first bucket because the proposal itself makes capability easiest to see. Reliability and partnership fit usually show up in the details. Exceptions, assumptions, response clarity, and handoff quality often tell you more than the executive summary does.

Ask better questions

A useful vendor proposal evaluation template doesn’t just list categories. It breaks them into answerable prompts.

Examples that work:

- For scope clarity: What has the vendor explicitly excluded?

- For implementation: Are milestones specific enough to validate staffing and timing?

- For service model: Who owns escalation, and how is support delivered after go-live?

- For commercial review: Are change-order rates clear and reasonable?

- For controls: Does the proposal explain how errors, rework, or nonconforming deliverables are handled?

For teams refining their scorecard design, this guide to request for proposal evaluation criteria is a useful companion because it helps translate broad priorities into scoreable questions.

Customize by stakeholder, not by habit

Procurement should not write every criterion alone. Finance, legal, operations, IT, security, and business owners each see different failure points.

Use short stakeholder interviews before finalizing the template. Ask each group two things:

- What would make this vendor unusable after selection?

- What would make this vendor expensive even if the quoted price looks fine?

Those answers usually reveal the hidden criteria your first draft missed.

If evaluators need to “remember to consider” something important, it belongs in the template.

A final caution. Don’t turn the file into a museum of every criterion you’ve ever used. If a line item won’t influence a decision, remove it. The point is disciplined comparison, not administrative theater.

Creating a Fair and Defensible Scoring System

Defining criteria is one task. Scoring them fairly is another. Plenty of teams get the categories right and still create noise because reviewers interpret the scale differently or because the weighting doesn’t match the organization’s priorities.

A scoring system becomes defensible when it does three things well. It reflects what matters most, it forces consistent judgment, and it leaves an audit trail.

Use weighting to reflect business reality

Not every criterion deserves equal importance. Responsive’s RFP evaluation criteria guidance, for example, describes an evaluation matrix weighted as Technical Expertise (25%), Capabilities (40%), Data Security (10%), HR Policies (10%), and Diversity & Sustainability (15%), paired with a 0 to 5 scoring scale to reduce subjectivity.

That exact split won’t fit every procurement event, but the principle is sound. Weighting should reflect the cost of being wrong.

If you’re buying a strategic service, capabilities may deserve the heaviest weight. If you’re buying into a regulated environment, security and compliance may move higher. If a vendor will interact directly with your customers or employees, service and support should not sit at the margins.

Define the scoring scale before review begins

A 0 to 5 scale works well because it’s detailed enough to separate vendors without pretending to be mathematically precise.

Use clear definitions:

- 5 = Very Good. Exceeds expectations.

- 4 = Good. Meets expectations.

- 3 = Standard. Meets most expectations.

- 2 = Adequate. Meets some expectations.

- 1 = Unsatisfactory. Misses most expectations.

- 0 = Substandard. Falls far below expectations.

That language matters. Without it, one evaluator uses 3 as “acceptable,” another treats 3 as “borderline,” and the totals become unreliable.
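Encoding the scale once, and rejecting anything outside it, stops a reviewer from entering 3.5 or 7 into a 0-to-5 sheet. This is a minimal Python sketch. The labels are copied from the list above, `check_score` is a hypothetical helper, and accepting values like 4.0 is an assumption about how a spreadsheet export might deliver whole numbers.

```python
# Labels copied from the 0-5 scale defined above.
SCALE = {
    5: "Very Good: exceeds expectations",
    4: "Good: meets expectations",
    3: "Standard: meets most expectations",
    2: "Adequate: meets some expectations",
    1: "Unsatisfactory: misses most expectations",
    0: "Substandard: falls far below expectations",
}

def check_score(raw) -> int:
    """Return the score as an int if it is a whole number on the scale; raise otherwise."""
    if isinstance(raw, bool):  # bool is a subclass of int, so reject it explicitly
        raise ValueError(f"score must be a whole number from 0 to 5, got {raw!r}")
    if isinstance(raw, float) and raw.is_integer():
        raw = int(raw)  # assumption: exports may turn 4 into 4.0
    if not isinstance(raw, int) or raw not in SCALE:
        raise ValueError(f"score must be a whole number from 0 to 5, got {raw!r}")
    return raw

print(SCALE[check_score(4.0)])  # prints Good: meets expectations
```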

Score evidence, not confidence

Procurement teams often drift into scoring how persuasive a proposal feels. That’s risky. The smoother writer gets rewarded over the stronger operator.

Score against evidence instead:

- Specificity over broad claims

- Documented process over verbal reassurance

- Clear exclusions and assumptions over polished generalities

- Support model detail over generic “white-glove service” language

A vague answer should not receive an average score just because the vendor has a strong brand.

Sample scored evaluation criteria

Below is a simple example of how one vendor’s raw scores convert into weighted results.

| Criterion | Weight | Vendor A Score (0-5) | Weighted Score |
|---|---|---|---|
| Technical Expertise | 25% | 4 | 1.00 |
| Capabilities | 40% | 5 | 2.00 |
| Data Security | 10% | 3 | 0.30 |
| HR Policies | 10% | 4 | 0.40 |
| Diversity & Sustainability | 15% | 2 | 0.30 |

The point of this table isn’t the math. It’s the discipline. A vendor can be outstanding in one area and still lose if weaknesses appear in criteria that matter more.
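The arithmetic behind the table is a weighted sum: multiply each raw score by its criterion weight, then add the products. Because the weights total 100%, the maximum possible result is 5.00. A minimal Python sketch using the weights and Vendor A scores from the table (`weighted_scores` is a hypothetical name; rounding each line to two decimals mirrors the table’s display):

```python
# Weights and Vendor A's raw 0-5 scores, copied from the table above.
WEIGHTS = {
    "Technical Expertise": 0.25,
    "Capabilities": 0.40,
    "Data Security": 0.10,
    "HR Policies": 0.10,
    "Diversity & Sustainability": 0.15,
}
VENDOR_A = {
    "Technical Expertise": 4,
    "Capabilities": 5,
    "Data Security": 3,
    "HR Policies": 4,
    "Diversity & Sustainability": 2,
}

def weighted_scores(weights: dict[str, float], scores: dict[str, int]) -> dict[str, float]:
    """Return weight x score per criterion, rounded to two decimals like the table."""
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"unscored criteria: {sorted(missing)}")
    return {name: round(w * scores[name], 2) for name, w in weights.items()}

lines = weighted_scores(WEIGHTS, VENDOR_A)
total = round(sum(lines.values()), 2)
print(f"Vendor A total: {total:.2f} out of 5.00")  # prints Vendor A total: 4.00 out of 5.00
```

Raising an error for unscored criteria is a deliberate choice: a blank cell silently counted as zero would distort the ranking without anyone noticing.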

Add written justification for every non-obvious score

A strong vendor proposal evaluation template leaves room for comments beside the score, not just at the bottom of the sheet. If an evaluator gives a 2 for support quality, there should be a short reason tied to the proposal. “No defined escalation path” is useful. “Didn’t feel strong” is not.

Good comment practices include:

- Reference the proposal content rather than memory

- Name the gap rather than writing general criticism

- Capture assumptions that need clarification later

- Flag disputed scores for review meeting discussion
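A short script or spreadsheet rule can enforce the justification requirement before the review meeting. The Python sketch below treats scores of 0, 1, 2, and 5 as “non-obvious” and requires a comment for them. That threshold is an assumption for illustration, and the `ScoreEntry` fields are hypothetical.

```python
from dataclasses import dataclass

# Assumption: extreme scores (0-2 and 5) are the ones that need a written reason.
NEEDS_COMMENT = {0, 1, 2, 5}

@dataclass
class ScoreEntry:
    vendor: str
    criterion: str
    evaluator: str
    score: int
    comment: str = ""

def missing_justifications(entries: list[ScoreEntry]) -> list[ScoreEntry]:
    """Return entries whose score needs a written reason but has a blank comment."""
    return [e for e in entries if e.score in NEEDS_COMMENT and not e.comment.strip()]

entries = [
    ScoreEntry("Vendor A", "Support quality", "Operations", 2, "No defined escalation path"),
    ScoreEntry("Vendor B", "Support quality", "Operations", 2),
    ScoreEntry("Vendor B", "Data Security", "Security", 4),
]
for e in missing_justifications(entries):
    print(f"{e.vendor} / {e.criterion} ({e.evaluator}): score {e.score} has no comment")
```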

Use multiple evaluators, then reconcile

Single-reviewer scoring is fast, but it usually amplifies blind spots. Multi-stakeholder scoring is slower, yet much stronger when the purchase affects more than one function.

Don’t ask every reviewer to score every line item. Ask people to score the areas they understand. Security should score security. Finance should score commercial clarity. Operations should score delivery practicality. Procurement should coordinate the process and challenge inconsistencies.

Reconciliation matters too. If scores vary sharply, review the evidence together. Sometimes one evaluator caught a real issue. Other times the rubric needs tightening. Either way, that conversation improves the integrity of the final result.
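To decide which scores to discuss, it helps to flag any vendor and criterion where evaluators disagree by more than a set margin. In this minimal Python sketch, the two-point threshold is an assumption rather than a standard, and `divergent_scores` is a hypothetical helper. Adjust the threshold to suit your rubric.

```python
from collections import defaultdict

# Assumption: a spread of 2+ points on the 0-5 scale warrants discussion.
SPREAD_THRESHOLD = 2

def divergent_scores(rows, threshold=SPREAD_THRESHOLD):
    """rows: iterable of (vendor, criterion, evaluator, score) tuples.
    Returns {(vendor, criterion): {evaluator: score}} where max - min >= threshold."""
    grouped = defaultdict(dict)
    for vendor, criterion, evaluator, score in rows:
        grouped[(vendor, criterion)][evaluator] = score
    return {
        key: by_evaluator
        for key, by_evaluator in grouped.items()
        if len(by_evaluator) > 1
        and max(by_evaluator.values()) - min(by_evaluator.values()) >= threshold
    }

rows = [
    ("Vendor A", "Implementation plan", "Operations", 2),
    ("Vendor A", "Implementation plan", "IT", 4),
    ("Vendor A", "Commercial clarity", "Finance", 3),
    ("Vendor A", "Commercial clarity", "Procurement", 4),
]
for (vendor, criterion), by_evaluator in divergent_scores(rows).items():
    print(f"Review {vendor} / {criterion}: {by_evaluator}")
```

The flag only tells you where to look. Whether the gap reflects a real issue or a loose rubric definition is still a judgment for the meeting.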

Common Pitfalls and How to Avoid Them

Most bad vendor decisions don’t fail because the spreadsheet was missing a formula. They fail because the evaluation process rewarded the wrong signals.

One proposal looked cheap. Another sounded impressive. A third met most needs but got knocked out for reasons nobody documented clearly. Those errors are avoidable if the process has guardrails.

Lowest price is not lowest cost

The easiest trap to spot is also the one teams keep walking into. They overweight quoted price and underweight service gaps, exclusions, and weak support.

That’s expensive. According to The RFP Success Company’s evaluation guidance, organizations that focus solely on the lowest cost see cost overruns in 9 out of 10 cases, driven by service gaps and poor support. If the low bidder needs constant intervention, submits frequent change requests, or can’t support the implementation properly, the savings disappear fast.

A better question is not “Who is cheapest?” It’s “Whose proposal gives us the strongest cost-value position with acceptable execution risk?”

Skip non-compliant vendors early

Another common mistake is letting every proposal flow into detailed scoring even when some vendors miss mandatory requirements. That wastes evaluator time and creates confusion later.

Use a compliance-first gate. Check mandatory items before committee scoring begins. This can include technical specifications, essential commercial terms, required certifications, and qualification thresholds. If a proposal fails a mandatory requirement, document the reason and stop there.

That gatekeeping approach is supported by P25 Best Practice guidance on proposal evaluation and vendor selection, which describes a two-step compliance framework and estimates that screening out non-compliant vendors early can reduce proposal evaluation cycle time by 20% to 30%.

Compliance review is not bureaucracy. It protects the team from spending hours debating a vendor who was never eligible to win.
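The compliance-first gate is a pass/fail check that runs before any weighted scoring. A minimal Python sketch, assuming each vendor’s mandatory items have already been recorded as true or false. The requirement names below are illustrative placeholders rather than a recommended list, and `compliance_gate` is a hypothetical helper.

```python
# Illustrative mandatory requirements; replace with your own pass/fail items.
MANDATORY = ["required_certifications", "essential_commercial_terms", "complete_response"]

def compliance_gate(vendors: dict[str, dict[str, bool]]) -> tuple[list[str], dict[str, list[str]]]:
    """Split vendors into those eligible for scoring and those excluded,
    recording every failed mandatory item as the documented reason."""
    eligible, excluded = [], {}
    for vendor, checks in vendors.items():
        # A missing answer counts as a failure, so incomplete responses are caught too.
        failed = [item for item in MANDATORY if not checks.get(item, False)]
        if failed:
            excluded[vendor] = failed
        else:
            eligible.append(vendor)
    return eligible, excluded

vendors = {
    "Vendor A": {"required_certifications": True, "essential_commercial_terms": True, "complete_response": True},
    "Vendor B": {"required_certifications": False, "essential_commercial_terms": True, "complete_response": True},
    "Vendor C": {"required_certifications": True, "essential_commercial_terms": True},
}
eligible, excluded = compliance_gate(vendors)
print("Proceed to scoring:", eligible)
for vendor, reasons in excluded.items():
    print(f"Excluded {vendor}: failed {', '.join(reasons)}")
```

Keeping the failed items in the output means the reason for each exclusion is written down at the moment the decision is made, which helps later with debriefs.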

Don’t let one evaluator dominate

When one person reads all proposals and assigns most of the scores, the process may feel efficient, but it becomes fragile. Procurement sees commercial issues. IT sees architecture risk. Operations sees delivery friction. Finance sees cost leakage. One person rarely sees all of it well.

Build a small evaluation team and assign criteria by expertise. Then reconcile differences in a structured meeting. This produces better decisions and gives the final outcome more credibility when challenged internally.

Watch for proposal formatting bias

A polished proposal can distort judgment. Teams often forgive weak substance because the document is easy to read. They penalize a strong but messy proposal because key details are harder to find.

That’s exactly why normalization matters before scoring. When data sits in a common structure, the committee reviews vendor content, not vendor formatting skill.

Keep the record clean

Many organizations still make a reasonable choice but document it poorly. Months later, nobody remembers why Vendor B lost by a narrow margin or why a low score was assigned in security review.

Keep the evaluation file complete. Save the criteria version used, score comments, compliance notes, clarification responses, and final rationale. A clean record helps with vendor debriefs, future renewals, audits, and the next sourcing event.

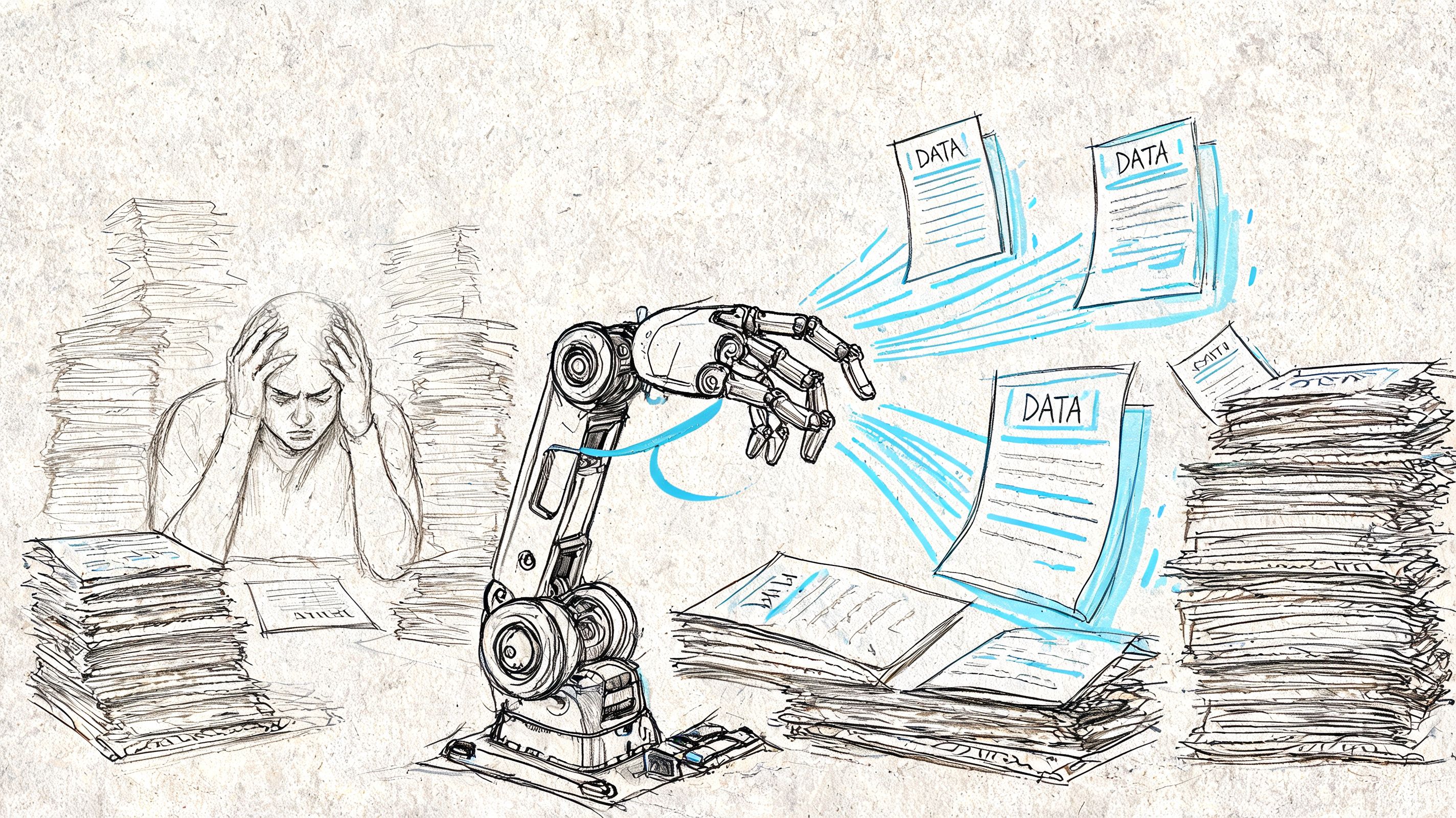

Speed Up Evaluation with Automated Data Extraction

The hidden bottleneck in vendor evaluation isn’t usually scoring. It’s preparing proposals for scoring.

If pricing is trapped in one PDF, support terms sit in a Word attachment, and implementation assumptions appear in scanned appendices, your team spends hours rebuilding structured data by hand. That’s exactly the gap most template articles ignore.

Use parsing before scoring

The practical fix is to insert an extraction layer before the evaluation template. Upload the proposals, identify the fields you need, and export structured tables that feed the scorecard.

That’s where tools such as DocParseMagic fit. It’s a no-code document parsing platform that can extract fields like pricing tables, line items, dates, terms, and policy details from PDFs, Word files, scans, and photos into spreadsheet-ready outputs. For procurement teams dealing with messy proposal packages, that means less copying and fewer transcription errors.

The workflow is straightforward:

- Collect the files in their original formats

- Parse key fields such as pricing, service levels, milestones, payment terms, and exclusions

- Export to a common table

- Load the data into the vendor proposal evaluation template for scoring and comments
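Whatever tool does the extraction, the last step is mapping each vendor’s labels onto one set of columns. The Python sketch below assumes the extracted fields arrive as one dictionary per vendor. The column names, alias map, and sample values are all hypothetical, and the code does not call DocParseMagic or any other product’s API. It only shows the normalization idea using the standard `csv` module.

```python
import csv
import io

# Hypothetical common columns for the evaluation template.
COLUMNS = ["vendor", "total_price", "implementation_weeks", "payment_terms", "exclusions"]

# Hypothetical aliases: how different proposals might label the same field.
ALIASES = {
    "price": "total_price", "total cost": "total_price", "fee": "total_price",
    "timeline (weeks)": "implementation_weeks", "duration_weeks": "implementation_weeks",
    "payment": "payment_terms", "terms": "payment_terms",
    "out of scope": "exclusions", "excluded": "exclusions",
}

def normalize(vendor: str, extracted: dict[str, str]) -> dict[str, str]:
    """Map one vendor's extracted fields onto COLUMNS. Unknown labels are dropped,
    and missing fields stay blank so gaps remain visible to reviewers."""
    row = {col: "" for col in COLUMNS}
    row["vendor"] = vendor
    for label, value in extracted.items():
        key = label.strip().lower()
        column = ALIASES.get(key, key)
        if column in row and column != "vendor":
            row[column] = value.strip()
    return row

rows = [
    normalize("Vendor A", {"Total Cost": "120,000", "Timeline (weeks)": "12", "Payment": "Net 30"}),
    normalize("Vendor B", {"fee": "98,500", "duration_weeks": "16", "Out of scope": "Data migration"}),
]
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
writer.writeheader()
writer.writerows(rows)
print(buffer.getvalue())
```

Leaving missing fields blank, rather than guessing, is intentional: an empty payment-terms cell is itself something to raise as a clarification.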

Teams looking at this workflow in more detail can review this guide to automated data extraction for document-heavy processes.

Why automation improves judgment, not just speed

Automation matters for more than efficiency. It also reduces the chance that a reviewer misses something buried in inconsistent formatting.

That’s important because, according to a vendor evaluation summary on Scribd, a 2026 PwC survey found that 61% of sourcing teams faced vendor-related disruptions from unassessed risks, that manual evaluations missed 44% of latent vulnerabilities, and that hybrid AI-human scoring improved decision accuracy by 37%.

Human review still matters. Procurement should decide what counts, how it’s weighted, and when exceptions are acceptable. But AI-assisted extraction can surface the relevant data faster and in a more comparable form.


The key shift is this. Don’t ask evaluators to become part-time data clerks. Ask them to review structured evidence and make a decision.

From Tedious Comparison to Strategic Decision

A good vendor proposal evaluation template does more than organize scores. It protects the decision. It gives procurement a repeatable method, gives stakeholders a common language, and gives leadership a clear explanation of why one vendor was selected over another.

The part many teams miss is the preparation layer. When proposals arrive in messy formats, the quality of the final decision depends on how well you convert unstructured content into clean comparison data. That step determines whether your process is objective or just looks objective.

The strongest procurement teams combine three habits. They screen for compliance early. They score with weighted criteria and written justification. They automate the extraction work that slows everyone down.

If you want to tighten the full process after selection too, these vendor management best practices are a useful next step.

A cleaner workflow doesn’t just save time. It lets procurement spend more of its effort on trade-offs, risk, and supplier fit. That’s where the core value sits.

If you want to stop copying data out of PDFs and start evaluating vendors from clean, structured tables, try DocParseMagic. It helps teams turn messy proposals, invoices, statements, and other business documents into spreadsheet-ready data so the scoring process starts with evidence instead of manual cleanup.